Google Translate is biased, and here's how we can fix it!

April 11, 2021

That’s right, Google Translate is biased. When it has to assign gender while translating from a gender-neutral language like Hungarian, it defaults to stereotypical translations. But why does it do this? And how can we fix it?

I’m Mukul, and I studied this topic as part of one of my Master’s modules at the University of Cambridge, so if you’re looking for a solid introduction to research on bias in neural machine translation, this is the post for you.

Overview

We’ll look at:

- Where bias in machine translation comes from

- Why the current solution breaks down

- How we use datasets like WinoMT to measure bias in translation systems

- How we can fix the data

- And if you stick around to the end, we’ll talk about the approach I think does the best job of fixing models: domain adaptation

Bias

Modern machine learning techniques, whether word embeddings or large language models, derive meaning from large corpora of text by learning the statistical patterns in their training data. So if the data is biased, our models will be biased too.

Before continuing, I want to clarify a couple of things:

- Dataset bias isn’t the only reason machine learning models are biased. Bias can also come from the models themselves, and from any other decision you make about how to model your data.

- Dataset bias comes from a range of sources, from the speakers to the curators. In a previous video, I talked about data statements, which can help identify these sources of bias.

In this post, we’re tackling cisnormative bias (that is, male-female gender bias). The trouble is that gender bias isn’t always obvious. It shows up most prominently when the model has to “fill in” gender while translating between languages that mark gender in their grammar to different degrees.

In this tweet from 2017, we’re translating from Turkish, which is a gender-neutral language, and Google Translate filled in the genders based on stereotypes. Pretty worrying, right?

This wasn’t a one-off. In September 2018, a paper was published analysing the extent of the bias in Google Translate across a whole host of gender-neutral languages. The authors compared the gender of the translations for different occupations with the proportion of each occupation’s workforce that was female, using data from the U.S. Bureau of Labor Statistics. They found that Google Translate amplified the gender imbalances, mostly defaulting to male translations.

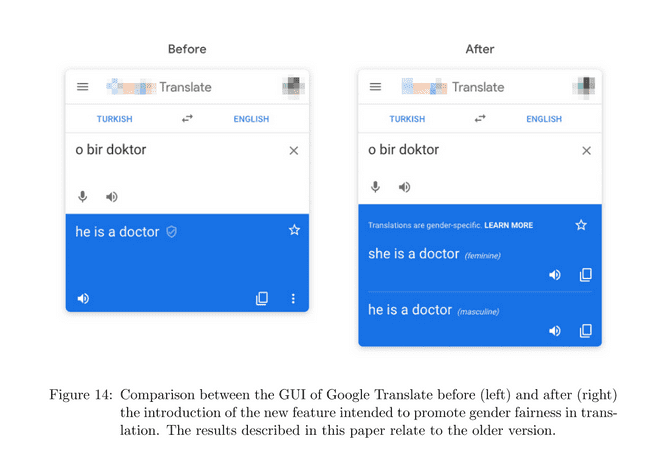

In response, Google announced an update to Google Translate that would display both masculine and feminine translations instead of a single one. All solved, right?

The authors in the paper certainly thought so, saying “machine learning translation tools can be debiased, dropping the need for resorting to a balanced training set.” This didn’t age well!

Pipelines aren’t the solution

Google Translate’s solution patched the output of the translation through a three-step pipeline:

Detection: train a classifier to determine whether a given query is gender-ambiguous or not.

Generation: generate masculine and feminine translations for the query.

Validation: verify that the two translations are high quality before showing them to users.
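To make the three steps concrete, here is a minimal Python sketch of how a detect-generate-validate pipeline like this could be wired together. The function names (`is_ambiguous`, `translate`, `translate_gendered`, `validate`) are hypothetical stand-ins for the separate models involved, not Google’s actual components, and the toy lambdas in the usage example are not real translation systems.

```python
from typing import Callable, List


def gender_aware_translate(
    query: str,
    is_ambiguous: Callable[[str], bool],            # detection classifier (one per source language)
    translate: Callable[[str], str],                # ordinary single translation
    translate_gendered: Callable[[str, str], str],  # translation forced to one gender
    validate: Callable[[str, str], bool],           # quality check on the pair
) -> List[str]:
    # 1. Detection: only gender-ambiguous queries get two translations.
    if not is_ambiguous(query):
        return [translate(query)]
    # 2. Generation: produce a masculine and a feminine translation.
    masculine = translate_gendered(query, "masculine")
    feminine = translate_gendered(query, "feminine")
    # 3. Validation: show both only if the pair passes the check...
    if validate(masculine, feminine):
        return [masculine, feminine]
    # ...otherwise fall back to a single translation (a false negative).
    return [translate(query)]


# Hypothetical toy stubs in place of real models; the query is Turkish for "he/she is a doctor".
outputs = gender_aware_translate(
    "o bir doktor",
    is_ambiguous=lambda q: q.startswith("o "),
    translate=lambda q: "he is a doctor",
    translate_gendered=lambda q, g: ("he" if g == "masculine" else "she") + " is a doctor",
    validate=lambda m, f: m.split()[1:] == f.split()[1:],
)
print(outputs)  # ['he is a doctor', 'she is a doctor']
```

Whenever `validate` rejects the pair, the user silently gets a single gendered translation back.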

This has two main flaws:

- First, we need to train this detection classifier for each source language, which is data-intensive and doesn’t scale as more languages are added!

- Second, it produced lots of false negatives: it failed to show gender-specific translations for up to 40% of eligible queries, because the two translations often weren’t exactly equivalent apart from gender.

Okay, so Google went back to the drawing board and redesigned the pipeline, as described in this blog post from 2020.

Now the pipeline is:

Generate the default translation.

If it is gendered, rewrite it into the translation with the other gender using another language model.

Check whether the rewritten translation is accurate.
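A similarly hedged Python sketch of the rewrite-based flow follows. Again, `translate`, `is_gendered`, `rewrite` and `is_accurate` are hypothetical placeholders for separate models; the point it illustrates is that the “is it gendered?” check now looks at the translation rather than needing a classifier for the source query.

```python
from typing import Callable, List


def rewrite_pipeline(
    query: str,
    translate: Callable[[str], str],          # step 1: default translation
    is_gendered: Callable[[str], bool],       # does the output commit to a gender?
    rewrite: Callable[[str], str],            # step 2: produce the other gender
    is_accurate: Callable[[str, str], bool],  # step 3: check the rewrite against the query
) -> List[str]:
    default = translate(query)
    if not is_gendered(default):
        return [default]
    alternative = rewrite(default)
    if is_accurate(query, alternative):
        return [default, alternative]
    return [default]


# Hypothetical toy stubs: the "rewriter" just swaps the leading English pronoun.
swap = {"he": "she", "she": "he"}
outputs = rewrite_pipeline(
    "o bir doktor",
    translate=lambda q: "he is a doctor",
    is_gendered=lambda t: t.split()[0] in swap,
    rewrite=lambda t: " ".join([swap[t.split()[0]]] + t.split()[1:]),
    is_accurate=lambda q, t: True,
)
print(outputs)  # ['he is a doctor', 'she is a doctor']
```

Each stage is still its own model, so every extra stage adds another component that can interact with the others in unexpected ways.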

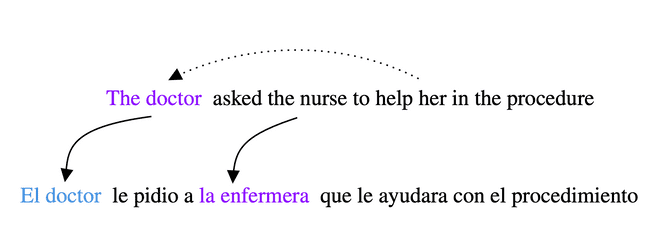

This breaks down too, even on fairly simple edge cases, as this English-to-Spanish translation shows.

This is a hard problem, and I don’t want to dismiss the work done by the team, but personally I don’t think monkey-patching the model is the way to go, as you’ll still end up with edge cases.

First, the model is still biased, even if you can’t see it in the output of Google Translate.

Second, we build up intellectual debt: the more models we add to our pipeline, the harder it becomes to debug and understand, as the models interact with each other in weird and wonderful ways. Check out this talk by Neil Lawrence to learn more about intellectual debt.

Evaluating Gender Bias in Machine Translation

Right, so if you’re still with me, we’ve outlined what the issue is and why the current approach doesn’t work. I now want to shift the focus to research that could help reduce bias.

First, we’ve got to identify bias in our machine translation models.

The challenge set we’ll use for this is WinoMT, introduced by Stanovsky et al. It contains sentences with occupation nouns whose gender can be identified from a pronoun elsewhere in the sentence that refers to them.

For example, in the sentence below, the doctor is referred to as “her”, so we know the doctor is female. However, the machine translation into Spanish defaults to the masculine form of doctor, and translates nurse into the feminine form, despite there being no information in the sentence from which to infer the nurse’s gender.

The challenge works as follows:

- We get our model to translate the English sentences into another target language, like Spanish or German

- We then align the source and target sentences, so “the doctor” lines up with “el doctor”, and “the nurse” lines up with “la enfermera”.

- We then determine the gender of the target noun. This is language-specific; in this case, we can tell the gender from the articles “el” and “la”.

- Finally, we compare this gender against the gold gender annotations and measure how accurately the system assigns gender.
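The last two steps can be sketched in a few lines of Python. This is a toy version under strong assumptions: the word alignment is simply given (in practice it comes from an automatic aligner), the only gender cue read is the Spanish article directly before the aligned noun, and the two example sentences are made up for illustration. The real WinoMT evaluation relies on more thorough, language-specific analysis.

```python
# Hypothetical, minimal Spanish gender cues: the article before the noun.
SPANISH_ARTICLES = {"el": "male", "un": "male", "la": "female", "una": "female"}


def gender_of_aligned_noun(target_tokens, noun_index):
    """Infer gender from the article preceding the aligned target noun."""
    if noun_index == 0:
        return "unknown"
    return SPANISH_ARTICLES.get(target_tokens[noun_index - 1].lower(), "unknown")


def gender_accuracy(examples):
    """examples: (target_tokens, aligned_noun_index, gold_gender) triples."""
    correct = sum(
        gender_of_aligned_noun(tokens, index) == gold
        for tokens, index, gold in examples
    )
    return correct / len(examples)


examples = [
    # Gold: the doctor is female, but the translation says "El doctor".
    ("El doctor habló con la enfermera .".split(), 1, "female"),
    # Gold: the lawyer is female, and "La abogada" is feminine.
    ("La abogada firmó el contrato .".split(), 1, "female"),
]
print(gender_accuracy(examples))  # 0.5
```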

However, accuracy doesn’t tell the full story, so we measure two additional metrics: ∆G and ∆S.

∆G denotes the difference in performance (F1 score) between masculine and feminine entities.

∆S is the difference in performance (F1 score) between pro-stereotypical and anti-stereotypical gender role assignments.

We’d like ∆G and ∆S to be close to 0. A large positive ∆G means the model performs much better on male entities, and a large positive ∆S means the model does much better on stereotypical gender roles than on anti-stereotypical ones.

It’s worth noting that we need to look at these metrics together. Accuracy alone obviously doesn’t reveal bias, but equally, ∆S could be zero for a system that always predicts male defaults: it will get the female entities wrong in both the pro- and anti-stereotypical examples, and likewise get the male entities right in both.
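To see why the metrics must be read together, here is a small Python sketch that computes accuracy, ∆G and ∆S on a made-up set of eight predictions. Two assumptions to flag: ∆G is taken as F1(male) − F1(female), treating each gender as the positive class in turn, and the “performance” compared by ∆S is taken to be the mean of those two F1 scores within the pro- and anti-stereotypical subsets. The official WinoMT scripts may aggregate slightly differently.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    gold: str        # annotated gender: "male" or "female"
    predicted: str   # gender recovered from the translation
    stereotype: str  # "pro" or "anti"


def f1(preds, gender):
    """F1 score treating `gender` as the positive class."""
    tp = sum(p.gold == gender and p.predicted == gender for p in preds)
    fp = sum(p.gold != gender and p.predicted == gender for p in preds)
    fn = sum(p.gold == gender and p.predicted != gender for p in preds)
    if tp == 0:
        return 0.0
    precision, recall = tp / (tp + fp), tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def mean_f1(preds):
    return (f1(preds, "male") + f1(preds, "female")) / 2


def winomt_metrics(preds):
    accuracy = sum(p.gold == p.predicted for p in preds) / len(preds)
    delta_g = f1(preds, "male") - f1(preds, "female")
    pro = [p for p in preds if p.stereotype == "pro"]
    anti = [p for p in preds if p.stereotype == "anti"]
    delta_s = mean_f1(pro) - mean_f1(anti)
    return accuracy, delta_g, delta_s


# Made-up test set: two entities of each gender in each subset.
gold = (
    [("male", "pro")] * 2 + [("female", "pro")] * 2
    + [("male", "anti")] * 2 + [("female", "anti")] * 2
)

# System A always predicts male.
always_male = [Prediction(g, "male", s) for g, s in gold]
# System B follows the stereotype: right on "pro", flipped on "anti".
flip = {"male": "female", "female": "male"}
stereotyped = [Prediction(g, g if s == "pro" else flip[g], s) for g, s in gold]

for name, preds in [("always male", always_male), ("stereotyped", stereotyped)]:
    acc, dg, ds = winomt_metrics(preds)
    print(f"{name}: accuracy={acc:.2f}  dG={dg:.2f}  dS={ds:.2f}")
# always male: accuracy=0.50  dG=0.67  dS=0.00
# stereotyped: accuracy=0.50  dG=0.00  dS=1.00
```

Both toy systems score 50% accuracy, yet the always-male system shows ∆S = 0 and the stereotyped one shows ∆G = 0, so any single number on its own would hide the problem.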

Okay, now that we have our metrics and challenge set, let’s discuss how you might fix this.

Start with the Data

It’s no surprise that to reduce bias we need curated, balanced datasets. I also think we need to go one step further and annotate our datasets, not just at the dataset level using Data Statements or Datasheets, but at the level of individual sentences.

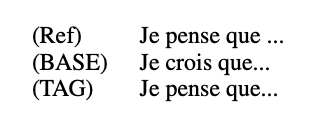

In the paper Getting Gender Right in Neural Machine Translation, the authors tag each sentence with the speaker’s gender, which improved the gender accuracy of the translations. In some cases, the model trained on the tagged sentences was able to get not just the correct gender but also the correct word choice.

I personally think that tagging the sentences in our curated datasets would give the translation model cleaner signals, rather than leaving it to infer its own model of gender.
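Sentence-level tagging is simple to implement. Below is a minimal Python sketch that prepends a speaker-gender token to each source sentence. The `<FEMALE>`/`<MALE>` token format is an assumption for illustration, not necessarily the exact format used in the paper. At inference time, you would prepend the tag for the gender you want the translation to reflect.

```python
def tag_speaker_gender(corpus):
    """corpus: (source, target, speaker_gender) triples -> tagged (source, target) pairs."""
    # Hypothetical tag format: "<MALE>" / "<FEMALE>" prepended to the source side only.
    return [(f"<{gender.upper()}> {source}", target) for source, target, gender in corpus]


corpus = [
    ("I am tired.", "Je suis fatigué.", "male"),
    ("I am tired.", "Je suis fatiguée.", "female"),
]
for source, target in tag_speaker_gender(corpus):
    print(source, "->", target)
# <MALE> I am tired. -> Je suis fatigué.
# <FEMALE> I am tired. -> Je suis fatiguée.
```

Without the tag, these two training pairs look contradictory to the model; with it, the French adjective agreement has an explicit signal to condition on.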

There is just one problem, though. How do you curate a dataset at the scale we use to train large language models, with millions and millions of examples? Curation is extremely labour-intensive. Furthermore, to create a balanced dataset, you have to either:

- upsample the minority group

- downsample the majority group

- or counterfactually augment your data to avoid the model picking up bias.

(If you have a sentence “He is a doctor”, the counterfactual would be “She is a doctor”.)
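For English, a naive counterfactual augmenter can be written as a word-level swap. The Python sketch below is a hypothetical, deliberately tiny version: the swap list is illustrative only, and it resolves the ambiguous “her” (which could correspond to “his” or “him”) by always choosing “his”, something a real implementation would need part-of-speech information to get right.

```python
import re

# Hypothetical swap list; real systems need much larger lexicons (names, titles, ...).
SWAPS = {
    "he": "she", "she": "he",
    "him": "her", "his": "her",
    "her": "his",  # ambiguous: "her" can also correspond to "him"
    "man": "woman", "woman": "man",
    "men": "women", "women": "men",
}


def gender_swap(sentence):
    """Return the counterfactual sentence with gendered words swapped."""
    def replace(match):
        word = match.group(0)
        swapped = SWAPS.get(word.lower())
        if swapped is None:
            return word
        # Preserve sentence-initial capitalisation.
        return swapped.capitalize() if word[0].isupper() else swapped

    return re.sub(r"[A-Za-z]+", replace, sentence)


def augment(corpus):
    """Counterfactually augment a corpus: keep the originals, add swapped copies."""
    return corpus + [gender_swap(sentence) for sentence in corpus]


print(augment(["He is a doctor.", "The nurse finished her work."]))
# ['He is a doctor.', 'The nurse finished her work.', 'She is a doctor.', 'The nurse finished his work.']
```

Because `re.sub` calls `replace` once per word, “his” and “her” are each swapped exactly once rather than being flipped back.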

Straightforward for English, right? But NLP ≠ English! Counterfactual augmentation is much harder for languages like German, where gender is also marked on articles, adjectives and noun endings. One crude way to approximate it is to translate the gender-swapped English counterfactuals using the translation system itself. It’s not perfect, as these translations will contain some of the system’s biases, but it’s somewhat better than before.

Reducing Gender Bias in Neural Machine Translation as a Domain Adaptation Problem

Domain adaptation (linked paper) is the final piece of the jigsaw in this post. Rather than training on a large, somewhat-debiased dataset, we take an already-trained model and fine-tune it on a small, handcrafted set of trusted, gender-balanced examples.

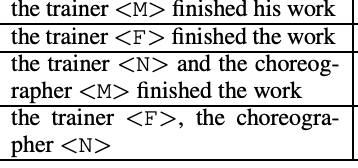

The handcrafted dataset is of the form:

- The [PROFESSION] finished [his|her] work.

In total, we select 194 professions, giving just 388 sentences in a gender-balanced set, which is small enough to verify by hand. We remove professions that occur in WinoMT to prevent memorisation of vocabulary from affecting our accuracy, which drops us down to 216 sentences. Then we add adjective-based sentences to get back up to 388 examples:

- The [adjective] [man|woman] finished [his|her] work.
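These templates are easy to generate programmatically. This Python sketch uses tiny hypothetical word lists in place of the real 194 professions and the adjective list; it drops any profession that appears in WinoMT, then tops the set up with adjective sentences until it reaches the target size, keeping “his” and “her” sentences in equal numbers.

```python
def build_handcrafted_set(professions, adjectives, winomt_professions, target_size):
    sentences = []
    # "The [PROFESSION] finished [his|her] work.", skipping WinoMT professions.
    for profession in professions:
        if profession in winomt_professions:
            continue
        for pronoun in ("his", "her"):
            sentences.append(f"The {profession} finished {pronoun} work.")
    # "The [adjective] [man|woman] finished [his|her] work.", added in pairs.
    for adjective in adjectives:
        if len(sentences) >= target_size:
            break
        sentences.append(f"The {adjective} man finished his work.")
        sentences.append(f"The {adjective} woman finished her work.")
    return sentences


# Hypothetical toy lists, standing in for the real ones.
data = build_handcrafted_set(
    professions=["carpenter", "doctor", "nurse", "baker"],
    adjectives=["tall", "kind", "young"],
    winomt_professions={"doctor", "nurse"},
    target_size=8,
)
for sentence in data:
    print(sentence)
```

With the real lists, the numbers in the text work out as 216 profession sentences plus 172 adjective sentences; if each adjective yields one man/his and one woman/her sentence, that is 86 adjectives.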

And honestly, you might be thinking that these sentences are really simple and that 388 sentences is tiny, but this actually outperforms fine-tuning on the larger, noisier counterfactually-balanced dataset by a huge margin.

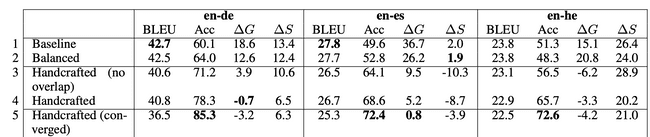

In this table, “baseline” refers to the biased model and “balanced” to fine-tuning on the counterfactually-balanced dataset. We can see that fine-tuning on the handcrafted dataset improves accuracy on WinoMT: for German, accuracy goes up from 60.1% to 78.3%, and ∆G and ∆S also drop to near enough 0.

You might be looking at the table and wondering: wait, isn’t the BLEU score, which measures overall translation quality, much worse?

The overall translation quality goes down because of catastrophic forgetting: fine-tuning on the small dataset makes the model forget other language concepts it learned during its original training. There’s a trade-off between how much you debias by fine-tuning and how much translation quality you keep.

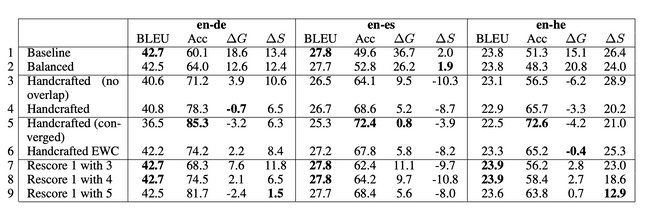

There are a couple of techniques we can use to address this. The first is Elastic Weight Consolidation (EWC). This adds a regularisation term to the loss function that penalises the weights for moving too far from their original values, and so forgetting the original task. Because some weights are more important to the original task than others, the more important weights are penalised more heavily, hence the term “elastic”.
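A common way to write the EWC objective is the fine-tuning loss plus a Fisher-weighted quadratic penalty, L(θ) = L_new(θ) + (λ/2) Σᵢ Fᵢ (θᵢ − θ*ᵢ)², where θ* are the original model’s weights and Fᵢ estimates how important weight i was to the original task. The Python sketch below implements that penalty on plain lists, assuming the mean squared gradient (the usual empirical approximation of the Fisher diagonal); in a real setup these would be tensors in your training framework, and λ would be tuned.

```python
def fisher_diagonal(per_example_grads):
    """Empirical Fisher diagonal: mean squared gradient of each weight on the original data."""
    n = len(per_example_grads)
    return [sum(g[i] ** 2 for g in per_example_grads) / n
            for i in range(len(per_example_grads[0]))]


def ewc_penalty(params, original_params, fisher, lam):
    """(lam / 2) * sum_i F_i * (theta_i - theta*_i) ** 2"""
    return lam / 2 * sum(
        f * (p - p0) ** 2 for p, p0, f in zip(params, original_params, fisher)
    )


def ewc_loss(finetune_loss, params, original_params, fisher, lam):
    """Fine-tuning loss plus the elastic penalty pulling weights back towards theta*."""
    return finetune_loss + ewc_penalty(params, original_params, fisher, lam)


# Two weights: the first mattered for the original task, the second did not.
fisher = fisher_diagonal([[1.0, 0.0], [3.0, 0.0]])   # [5.0, 0.0]
original = [0.0, 0.0]
print(ewc_penalty([1.0, 0.0], original, fisher, lam=1.0))  # 2.5
print(ewc_penalty([0.0, 1.0], original, fisher, lam=1.0))  # 0.0
```

Moving the important weight by 1 costs 2.5, while moving the unimportant one costs nothing: that asymmetry is the elasticity.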

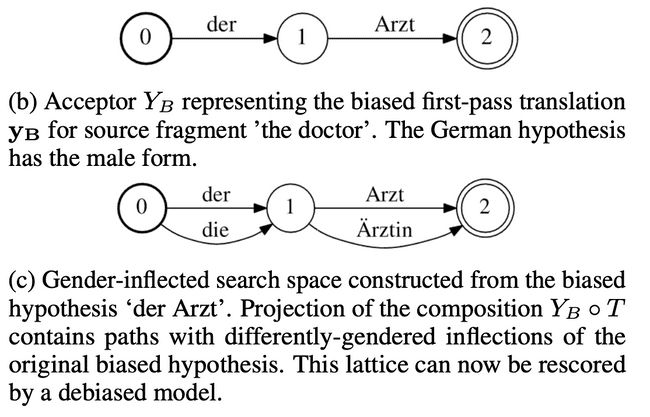

The second is lattice rescoring. If our original model translated the sentence as:

He is a doctor.

because it defaults to male, then to correct the bias, our debiased model should produce:

She is a doctor.

What we don’t want is for our debiased model to mistranslate “doctor” as something else, like:

She is a cat.

To prevent this from happening, we limit the alternative translations the debiased model can choose from to those that differ from the original model’s output only in the gender assigned.
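Here is a toy Python sketch of that constraint. It enumerates every variant of the baseline translation that differs only in gendered words, then lets a scoring function stand in for the debiased model. The variant table and `toy_debiased_score` are hypothetical; a real system would cover target-language inflections (articles, noun endings) and would represent the alternatives compactly as a lattice rather than listing every path, since the number of paths grows exponentially with the number of gendered words.

```python
from itertools import product

# Hypothetical table of gendered alternatives for each token.
GENDER_VARIANTS = {
    "He": ["He", "She"], "She": ["She", "He"],
    "his": ["his", "her"], "her": ["her", "his"],
}


def gender_variants(tokens):
    """Every sentence that differs from `tokens` only in gendered words."""
    options = [GENDER_VARIANTS.get(token, [token]) for token in tokens]
    return [list(path) for path in product(*options)]


def rescore(baseline_tokens, score):
    """Return the variant the debiased model (via `score`) rates highest."""
    return max(gender_variants(baseline_tokens), key=score)


def toy_debiased_score(tokens):
    # Hypothetical stand-in for the debiased model's log-probability:
    # here it simply prefers the feminine variant.
    return 1.0 if tokens[0] == "She" else 0.0


baseline = ["He", "is", "a", "doctor", "."]
print(gender_variants(baseline))
print(rescore(baseline, toy_debiased_score))  # ['She', 'is', 'a', 'doctor', '.']
```

Because “She is a cat.” never appears among the candidates, the debiased model cannot pick it, however much it might prefer it.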

And now we have the best of both worlds:

Line 8 of the table shows what happens when we use lattice rescoring with our handcrafted model and the baseline: we keep the baseline’s BLEU score of 42.7 while getting higher accuracy on WinoMT and lower ∆G and ∆S.

Let’s combine data and domain adaptation

Finally, the techniques we’ve discussed can be combined. For example, a follow-up paper noticed that machine translation systems tend to give the same gender to all entities in a sentence. To fix this, the authors create a handcrafted dataset in which the sentences are tagged with gender, and then use domain adaptation to fine-tune the model on it.

Pretty exciting if you ask me.

Summary

I want to wrap up by saying that I don’t think we’ll ever reach a point where bias is solved; it’s a journey. Just because your model performs well on WinoMT doesn’t mean it isn’t biased, in the same way that computer vision models trained on ImageNet won’t always perform well in the real world.

I am, however, excited that NeurIPS now has a dedicated track for datasets and benchmarks, so the future looks bright. We could do with datasets that go beyond looking at bias in occupations, so that this field can have its ImageNet moment.